Part 3: Web3 Data -> Cloud ML Pipelines (Spark in Practice)

📚 Series Navigation

👉 Part 1: AI, Blockchain, and Cloud: Who Actually Does What?

👉 Part 2: Why Fully Decentralized AI Is (Mostly) a Myth

👉 Part 3: Web3 Data -> Cloud ML Pipelines (Spark in Practice)

👉 Part 4: AI for Blockchain Fraud & Anomaly Detection

👉 Part 5: Smart Contracts + AI Agents: Autonomous Systems

👉 Part 6: Auditable AI: Using Blockchain for Trust & Governance


Why Blockchain Data Is Perfect for ML

Blockchains are:

- Append-only

- Time-ordered

- Public

- Behavior-rich

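This makes them well suited to feature engineering: transaction rates, burstiness (how clustered a wallet's activity is in time), and counterparty diversity (how many distinct addresses a wallet interacts with) can all be derived directly from the ledger.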
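To make those three features concrete, here is a minimal sketch in plain Python. It assumes transfers arrive as `(timestamp_seconds, sender, receiver)` tuples, which is an illustrative shape rather than any particular node's output, and the function name `wallet_features` is hypothetical. Burstiness uses one common definition, B = (sigma - mu) / (sigma + mu) over the gaps between transactions; other definitions exist.

```python
from statistics import mean, pstdev


def wallet_features(transfers, wallet):
    """Derive simple behavioral features for one sending wallet.

    transfers: iterable of (timestamp_seconds, sender, receiver) tuples
               (an assumed, illustrative input shape).
    """
    # Keep only transfers sent by this wallet, ordered by time.
    sent = sorted((ts, dst) for ts, src, dst in transfers if src == wallet)
    counterparties = {dst for _, dst in sent}

    if len(sent) < 2:
        # Not enough activity to measure a rate or inter-arrival gaps.
        return {
            "tx_per_hour": 0.0,
            "burstiness": 0.0,
            "unique_counterparties": len(counterparties),
        }

    times = [ts for ts, _ in sent]
    span_hours = (times[-1] - times[0]) / 3600
    gaps = [later - earlier for earlier, later in zip(times, times[1:])]
    mu, sigma = mean(gaps), pstdev(gaps)

    # B = (sigma - mu) / (sigma + mu): -1 means perfectly regular spacing,
    # values near +1 mean activity arrives in tight bursts.
    burstiness = (sigma - mu) / (sigma + mu) if (sigma + mu) > 0 else 0.0

    return {
        "tx_per_hour": len(sent) / span_hours if span_hours > 0 else float(len(sent)),
        "burstiness": burstiness,
        "unique_counterparties": len(counterparties),
    }
```

A wallet that sends exactly once an hour scores a burstiness of -1, while a bot that fires dozens of transactions in a few seconds and then goes quiet drifts toward +1.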

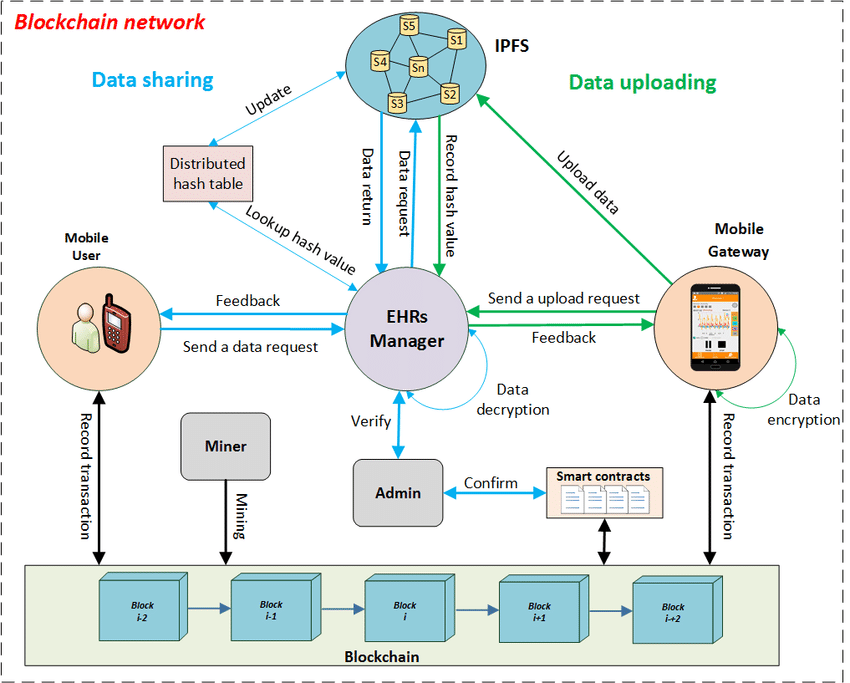

Reference Architecture

Blockchain node -> S3 (raw JSON) -> Spark (ETL + features) -> ML model -> Predictions on-chain

Treat the chain as the source of truth and let the cloud absorb the heavy compute.
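As a rough sketch of the first hop (node to raw JSON), the snippet below flattens one block into one row per transaction and serializes the rows as JSON Lines, a layout Spark reads easily. It assumes blocks shaped like an Ethereum JSON-RPC `eth_getBlockByNumber` response with full transaction objects, where quantities are hex strings. The output field names and both helper functions are hypothetical choices for this example.

```python
import json


def flatten_block(block):
    """Yield one flat dict per transaction in a block.

    Assumes Ethereum JSON-RPC style fields: hex-encoded "number",
    "timestamp" and "value", plus "hash", "from" and "to" per transaction.
    """
    number = int(block["number"], 16)
    timestamp = int(block["timestamp"], 16)
    for tx in block.get("transactions", []):
        yield {
            "block_number": number,
            "block_timestamp": timestamp,
            "tx_hash": tx["hash"],
            "from_address": tx["from"],
            # "to" is null for contract-creation transactions.
            "to_address": tx.get("to"),
            # Wei values can exceed 64 bits, so keep them as decimal strings.
            "value_wei": str(int(tx["value"], 16)),
        }


def to_json_lines(blocks):
    """Serialize all transactions from the given blocks as JSON Lines."""
    return "\n".join(
        json.dumps(row, sort_keys=True)
        for block in blocks
        for row in flatten_block(block)
    )
```

Writing the resulting text to object storage is left out on purpose; any S3 client would do, and the bucket layout is a deployment decision.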

PySpark Example


Optional: Commit Scores On-Chain (Python)

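One way to do this, sketched under assumptions: hash each batch of scores off-chain and commit only the digest. The snippet uses the standard library to build a deterministic SHA-256 digest. The `submit` argument is a hypothetical caller-supplied function that sends the digest to whatever contract you deploy; no specific contract or client library is assumed here.

```python
import hashlib
import json


def scores_digest(scores, model_version):
    """Return a SHA-256 hex digest over a canonical encoding of a score batch.

    scores: dict mapping wallet address -> score.
    Sorting keys and fixing separators makes the encoding deterministic,
    so anyone holding the same batch can recompute the same digest.
    """
    payload = json.dumps(
        {"model_version": model_version, "scores": scores},
        sort_keys=True,
        separators=(",", ":"),
    ).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def commit_scores(scores, model_version, submit):
    """Hash the batch and pass the digest to `submit`.

    `submit` is a hypothetical callable that performs the on-chain write
    (for example, a thin wrapper around a contract call) and returns
    whatever receipt that call produces.
    """
    digest = scores_digest(scores, model_version)
    receipt = submit(digest)
    return digest, receipt
```

Only 32 bytes per batch reach the chain, yet an auditor with the off-chain scores can recompute the digest and check it against the committed value.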

This keeps outputs auditable without pushing full inference on-chain.

ML Applications

- Wallet risk scoring

- Whale detection

- Bot identification

- Market behavior analysis

Why Cloud Wins

In practice, only cloud platforms currently provide all of the following together:

- Elastic compute

- Distributed storage

- Mature ML tooling

Closing

Web3 generates data. Cloud turns it into intelligence, and the chain preserves the audit trail.