Part 2: Why Fully Decentralized AI Is (Mostly) a Myth

📚 Series Navigation

👉 Part 1: AI, Blockchain, and Cloud: Who Actually Does What?

👉 Part 2: Why Fully Decentralized AI Is (Mostly) a Myth

👉 Part 3: Web3 Data -> Cloud ML Pipelines (Spark in Practice)

👉 Part 4: AI for Blockchain Fraud & Anomaly Detection

👉 Part 5: Smart Contracts + AI Agents: Autonomous Systems

👉 Part 6: Auditable AI: Using Blockchain for Trust & Governance


The Promise vs Reality

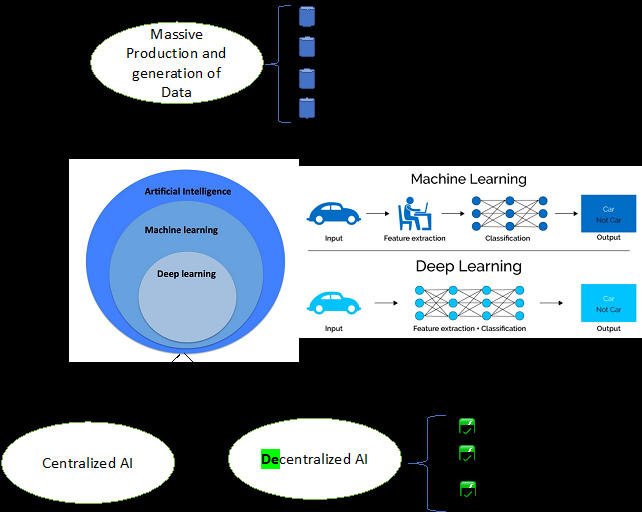

Decentralized AI promises trustless, censorship-resistant intelligence. The problem is physics and economics, not ideology. At scale, bandwidth, scheduling, and power costs dominate the design.

Hard Constraints Engineers Cannot Ignore

| Constraint | Why It Breaks Decentralized AI (DeAI) |
|---|---|
| GPUs | Scarce, expensive, and concentrated in a few providers |
| Latency | On-chain execution is not real-time |
| Cost | Serving inference at scale is expensive |
| Tooling | ML stacks assume cloud infrastructure |

These constraints show up immediately once you push beyond toy workloads, especially when you need consistent latency.

The GPU Problem

Training and inference require:

- High-bandwidth memory

- Fast interconnects

- Centralized scheduling

This naturally pushes AI workloads toward cloud hyperscalers.
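To see why fast interconnects matter, here is a back-of-the-envelope sketch using the bandwidth term of ring all-reduce, in which each of N workers sends roughly 2(N-1)/N times the gradient size per synchronization. The model size (7B parameters with fp16 gradients), the worker count, and both link speeds are assumptions chosen for illustration, and the estimate ignores per-message latency, compression, and compute overlap.

```python
def ring_allreduce_seconds(payload_bytes: float, workers: int, link_bits_per_s: float) -> float:
    """Bandwidth-only lower bound for one ring all-reduce of `payload_bytes`.

    Each worker sends (and receives) 2 * (N - 1) / N * payload bytes;
    per-message latency and compute/communication overlap are ignored.
    """
    bytes_sent_per_worker = 2 * (workers - 1) / workers * payload_bytes
    return bytes_sent_per_worker * 8 / link_bits_per_s


# Assumed workload: 7B parameters with 2-byte (fp16) gradients, 8 workers.
GRADIENT_BYTES = 7e9 * 2
WORKERS = 8

# Assumed link speeds, not measurements.
links = {
    "data-center interconnect (400 Gb/s)": 400e9,
    "residential uplink (100 Mb/s)": 100e6,
}

for name, bits_per_s in links.items():
    seconds = ring_allreduce_seconds(GRADIENT_BYTES, WORKERS, bits_per_s)
    print(f"{name}: {seconds:,.2f} s per gradient sync")
```

Under these assumptions the gap is simply the bandwidth ratio, about 4,000x: roughly half a second per sync in a data center versus more than half an hour over a home connection, repeated on every training step.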

What Actually Works

- Centralized inference

- Decentralized verification

- Token incentives for contributors

- Cryptographic proofs of output

The pattern is hybrid by design: compute where it is efficient, and verify where it is trust-minimized.
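The hybrid pattern can be sketched in a few lines. This is a minimal illustration, not a proof system: instead of a ZK proof it uses a SHA-256 hash commitment plus re-execution by a verifier, which assumes inference is bit-for-bit deterministic (a strong assumption on real GPUs). `toy_model`, `provider_infer`, `verifier_check`, and the model ID are hypothetical names for this sketch.

```python
import hashlib
import json


def commitment(model_id: str, prompt: str, output: str) -> str:
    """SHA-256 over a canonical JSON encoding of (model, input, output)."""
    payload = json.dumps(
        {"model": model_id, "input": prompt, "output": output},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def toy_model(prompt: str) -> str:
    """Hypothetical stand-in for a deterministic model."""
    return prompt.upper()


def provider_infer(model_id: str, prompt: str) -> tuple[str, str]:
    """Centralized side: run inference on efficient hardware, publish output + commitment."""
    output = toy_model(prompt)
    return output, commitment(model_id, prompt, output)


def verifier_check(model_id: str, prompt: str, claimed_output: str, claimed_commitment: str) -> bool:
    """Decentralized side: re-execute, then check both the output and its commitment."""
    expected = toy_model(prompt)
    return (
        expected == claimed_output
        and commitment(model_id, prompt, claimed_output) == claimed_commitment
    )


output, commit = provider_infer("toy-model-v1", "hello")
print(verifier_check("toy-model-v1", "hello", output, commit))    # True
print(verifier_check("toy-model-v1", "hello", "HELLO!", commit))  # False: tampered output
```

Production designs would typically replace full re-execution with ZK proofs or challenge-based (optimistic) verification; the 64-character commitment is the small piece that would be posted on-chain.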

🧩 Case Study: Decentralized Inference Marketplace

A startup attempted to build an inference marketplace on token-incentivized GPU nodes. The result: inconsistent uptime, latency spikes, and a centralized fallback added to keep the service reliable. Incentives improved utilization, but not the tail latency that production systems care about.

✅ Implementation Checklist

- Measure GPU economics (cost per request, utilization)

- Compare inference latency against block time

- Separate governance decentralization from compute
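The first two checklist items reduce to simple arithmetic. A minimal sketch, assuming hypothetical numbers throughout: the GPU price, request rate, latency SLO, 12-second block time, and latency samples are illustrative, not measured.

```python
import math


def nearest_rank_percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of the data at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def cost_per_1k_requests(gpu_usd_per_hour: float, peak_rps: float, utilization: float) -> float:
    """Amortized GPU cost per 1,000 requests; idle capacity is still paid for."""
    served_per_hour = peak_rps * 3600 * utilization
    return gpu_usd_per_hour / served_per_hour * 1000


# Hypothetical inputs, not measurements.
GPU_USD_PER_HOUR = 2.0
PEAK_RPS = 10.0
LATENCY_SLO_MS = 500
BLOCK_TIME_MS = 12_000
latency_ms = [120, 125, 126, 128, 130, 132, 135, 140, 900, 2500]

for utilization in (0.5, 0.9):
    cost = cost_per_1k_requests(GPU_USD_PER_HOUR, PEAK_RPS, utilization)
    print(f"utilization {utilization:.0%}: ${cost:.3f} per 1k requests")

p50 = nearest_rank_percentile(latency_ms, 50)
p99 = nearest_rank_percentile(latency_ms, 99)
print(f"p50 = {p50} ms, p99 = {p99} ms, SLO = {LATENCY_SLO_MS} ms")
print("tail latency within SLO:", p99 <= LATENCY_SLO_MS)
print("chain fits on the synchronous request path:", BLOCK_TIME_MS <= LATENCY_SLO_MS)
```

With these illustrative numbers the median looks healthy while p99 blows through the SLO, mirroring the case study: higher utilization lowers cost per request, but the tail is what users feel. And when a block takes longer than the whole latency budget, the chain belongs on the verification or settlement path, not the request path.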

⚖️ Tradeoffs

| Model | Pros | Cons |
|---|---|---|
| Centralized | Reliable | Requires trusting the operator |
| Fully DeAI | Ideologically pure | Unstable uptime and latency |
| Hybrid | Practical | More moving parts to build and operate |

Engineering Reality (Solidity)


You do not decentralize GPUs. You decentralize trust in results.
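One way to read "decentralize trust in results" is a k-of-n attestation rule: independent verifiers post the hash of the result they reproduced, and a result counts as accepted only when a strict majority agree. The sketch below models that contract logic in Python rather than Solidity; `AttestationRegistry`, its methods, and the verifier names are hypothetical, and the scheme assumes an honest majority of verifiers.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AttestationRegistry:
    """Hypothetical in-memory model of an on-chain k-of-n result registry."""

    verifiers: frozenset[str]
    quorum: int
    # request_id -> {verifier: result_hash}
    votes: dict[str, dict[str, str]] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # A strict majority guarantees at most one result hash can reach quorum.
        if not len(self.verifiers) // 2 < self.quorum <= len(self.verifiers):
            raise ValueError("quorum must be a strict majority of verifiers")

    def attest(self, request_id: str, verifier: str, result_hash: str) -> None:
        if verifier not in self.verifiers:
            raise PermissionError(f"{verifier} is not a registered verifier")
        # One vote per verifier per request; a repeat vote replaces the earlier one.
        self.votes.setdefault(request_id, {})[verifier] = result_hash

    def accepted_result(self, request_id: str) -> Optional[str]:
        """Return the result hash backed by at least `quorum` verifiers, else None."""
        tally = Counter(self.votes.get(request_id, {}).values())
        if not tally:
            return None
        result_hash, count = tally.most_common(1)[0]
        return result_hash if count >= self.quorum else None


registry = AttestationRegistry(verifiers=frozenset({"v1", "v2", "v3"}), quorum=2)
registry.attest("req-1", "v1", "abc123")
print(registry.accepted_result("req-1"))  # None: 1 of 3 votes
registry.attest("req-1", "v2", "abc123")
registry.attest("req-1", "v3", "ffff00")
print(registry.accepted_result("req-1"))  # abc123: 2 of 3 agree
```

The strict-majority check is the important design choice: with a lower quorum, two conflicting results could both be "accepted". The GPUs stay wherever they are efficient; only the acceptance decision is spread across parties.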

Conclusion

Decentralized AI is not dead, but it will always be hybrid in production.

📚 Further Reading

- ZKML research papers

- Rollup architecture discussions