AI Meets Web3: Reality, Architecture, and the Future

Series Overview

👉 Part 1: AI, Blockchain, and Cloud: Who Actually Does What?

👉 Part 2: Why Fully Decentralized AI Is (Mostly) a Myth

👉 Part 3: Web3 Data -> Cloud ML Pipelines (Spark in Practice)

👉 Part 4: AI for Blockchain Fraud & Anomaly Detection

👉 Part 5: Smart Contracts + AI Agents: Autonomous Systems

👉 Part 6: Auditable AI: Using Blockchain for Trust & Governance

Part 1: AI, Blockchain, and Cloud: Who Actually Does What?

Introduction

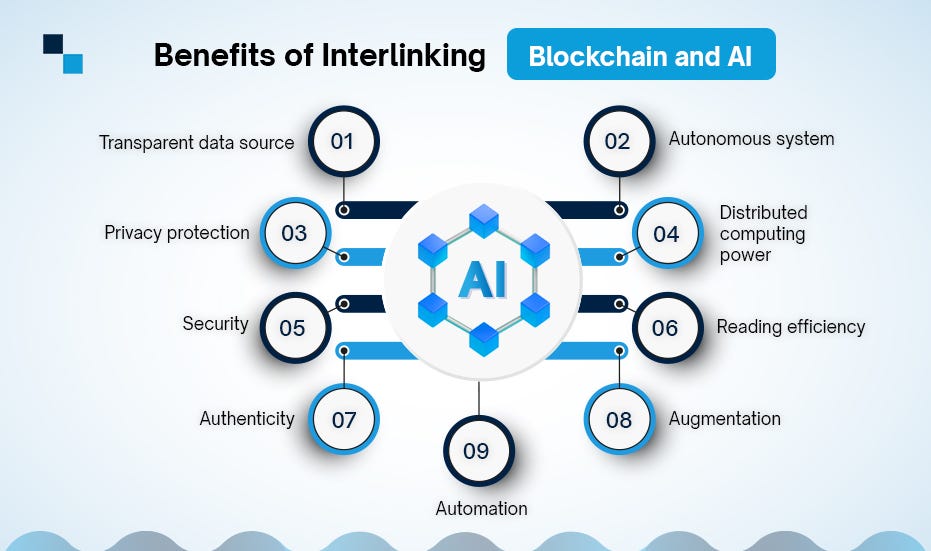

AI, blockchain, and cloud computing are often discussed as if they are competing paradigms. In reality, they solve quite different engineering problems. Confusion arises when teams try to force one technology to do the job of another. When that happens, systems get slower, more expensive, and harder to audit.

This article establishes a clear mental model for how these systems should work together in production.

The Core Responsibilities

| Layer | Responsibility | Why It Exists |
|---|---|---|
| AI | Prediction, classification, extraction | Intelligence |
| Blockchain | Immutability, ordering, verification | Trust |
| Cloud | Compute, storage, orchestration | Scale |

Key principle:

Any architecture that violates these boundaries will fail on cost, performance, or maintainability. Treat the boundaries as contracts, not suggestions.

Why Blockchain Is Not a Compute Engine

Blockchains are:

- Slow

- Deterministic

- Expensive per operation

Consensus trades speed for verifiability, which is the opposite of what inference needs. Blockchains are excellent for verifying outcomes, not generating them.

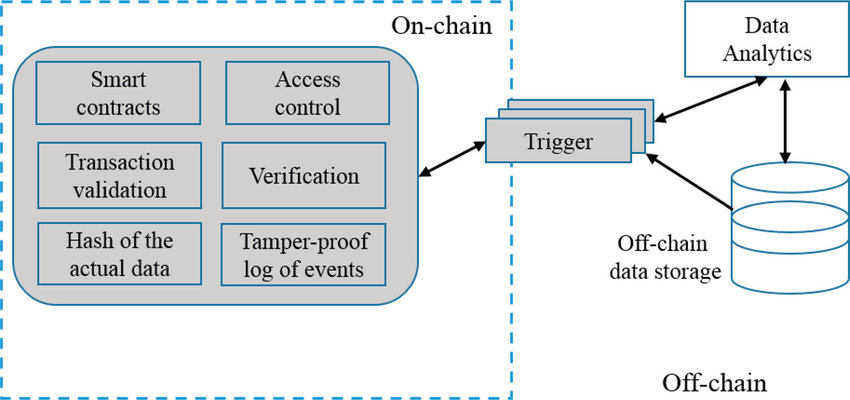

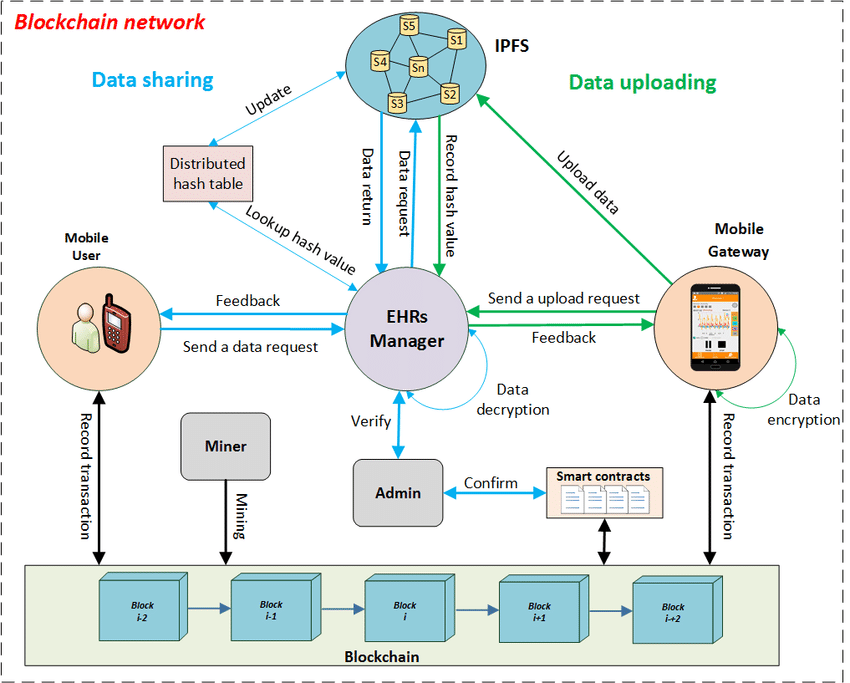

Practical Hybrid Architecture

What works in real systems:

- AI inference runs off-chain (cloud CPUs/GPUs)

- Outputs are hashed

- Hashes and metadata are stored on-chain

- Smart contracts verify integrity

This keeps heavy compute off-chain while preserving an auditable trail.

Minimal Code Example

Hashing the output creates a commitment that can be verified later without revealing the raw data.

AI Inference (Cloud)

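The original snippet for this step is not available, so what follows is a minimal sketch of the off-chain side under stated assumptions: the function names, the `risk-model-v1` identifier, and the sample payload are all hypothetical. It shows one way to turn an inference result into fixed-size hashes that could later be written on-chain.

```python
import hashlib
import json


def digest(obj) -> str:
    """SHA-256 over canonical JSON, so equal data always gives an equal hash."""
    payload = json.dumps(obj, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def commit_inference(model_id: str, inputs: dict, output: dict) -> dict:
    """Build the small record that would be written on-chain (hashes only)."""
    return {
        "model_id": model_id,
        "input_hash": digest(inputs),
        "output_hash": digest(output),
    }


# Hypothetical request and model output, for illustration only.
record = commit_inference(
    "risk-model-v1",
    {"wallet": "0xabc", "tx_count": 42},
    {"risk_score": 0.87},
)
print(record)
```

Canonical JSON matters here: without sorted keys and fixed separators, two services could serialize the same output differently and produce hashes that never match.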

Smart Contract (Verification)

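The contract code for this step is missing from the text, so the sketch below models the verification logic in Python rather than Solidity. `CommitmentRegistry` and its method names are hypothetical; a real contract would keep the mapping in contract storage and emit events.

```python
import hashlib


class CommitmentRegistry:
    """Off-chain model of a contract that stores and checks output hashes."""

    def __init__(self):
        self._commitments = {}  # request_id -> hex SHA-256 of the output

    def commit(self, request_id: str, output_hash: str) -> None:
        # Commitments are write-once, mirroring an append-only ledger.
        if request_id in self._commitments:
            raise ValueError("commitment already exists")
        self._commitments[request_id] = output_hash

    def verify(self, request_id: str, revealed_output: bytes) -> bool:
        """True only if the revealed bytes hash to the stored commitment."""
        expected = self._commitments.get(request_id)
        if expected is None:
            return False
        return hashlib.sha256(revealed_output).hexdigest() == expected


registry = CommitmentRegistry()
output = b'{"risk_score":0.87}'
registry.commit("req-1", hashlib.sha256(output).hexdigest())
print(registry.verify("req-1", output))                   # matches the commitment
print(registry.verify("req-1", b'{"risk_score":0.10}'))   # tampered output
```

One caveat on hiding the raw data: a bare hash only conceals outputs that are hard to guess. For low-entropy values such as a score in a small range, the committed bytes would also need a random salt.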

When This Pattern Makes Sense

- Financial risk scoring

- Fraud detection

- Model governance

- Compliance-driven AI

Closing Thoughts

AI decides.

Blockchain verifies.

Cloud scales.

Trying to collapse these roles is an architectural mistake. Keep the boundaries crisp and the system stays debuggable.


Part 2: Why Fully Decentralized AI Is (Mostly) a Myth

The Promise vs Reality

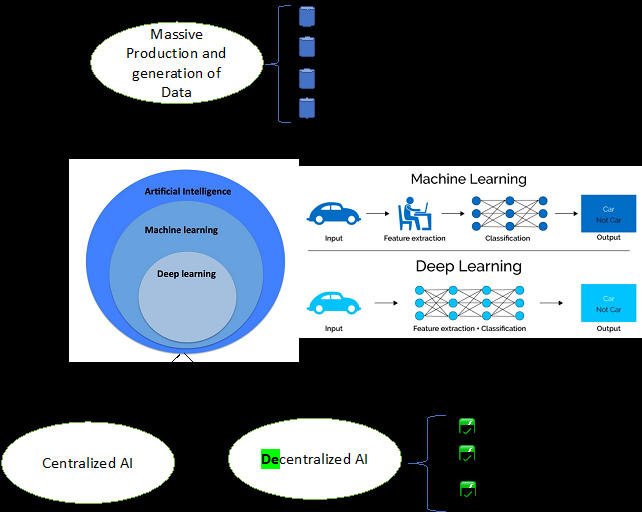

Decentralized AI promises trustless, censorship-resistant intelligence. The problem is physics and economics, not ideology. At scale, bandwidth, scheduling, and power costs dominate the design.

Hard Constraints Engineers Cannot Ignore

| Constraint | Why It Breaks DeAI |
|---|---|
| GPUs | Scarce, expensive, centralized |
| Latency | On-chain is not real-time |
| Cost | Inference at scale is costly |
| Tooling | ML stacks assume cloud |

These constraints show up immediately once you push beyond toy workloads, especially when you need consistent latency.

The GPU Problem

Training and inference at scale depend on:

- High-bandwidth memory

- Fast interconnects

- Centralized scheduling

This naturally pushes AI workloads toward cloud hyperscalers.

What Actually Works

- Centralized inference

- Decentralized verification

- Token incentives for contributors

- Cryptographic proofs of output

The pattern is hybrid by design: compute where it is efficient, and verify where it is trust-minimized.

🧩 Case Study: Decentralized Inference Marketplace

A startup tried to serve inference from token-incentivized GPU nodes. The result was inconsistent uptime, latency spikes, and a centralized fallback for reliability. Incentives helped utilization, but not the tail latency that production systems care about.

✅ Implementation Checklist

- Measure GPU economics

- Compare latency vs block time

- Separate governance decentralization from compute
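The "compare latency vs block time" item can be made concrete with a small measurement helper. This is a sketch under assumptions: the latency samples are made up for illustration, and the 12,000 ms block time is an assumed value (roughly Ethereum's slot time) that you would replace with your own chain's figure.

```python
import math


def percentile(samples, q):
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(q / 100 * len(ordered)))
    return ordered[rank - 1]


def latency_report(latencies_ms, block_time_ms):
    """Summarize median and tail latency relative to one block interval."""
    p50 = percentile(latencies_ms, 50)
    p99 = percentile(latencies_ms, 99)
    return {
        "p50_ms": p50,
        "p99_ms": p99,
        "p99_vs_block_time": p99 / block_time_ms,
    }


# Made-up inference latencies (ms); one slow outlier dominates the tail.
samples = [40, 42, 45, 48, 50, 55, 60, 75, 120, 900]
report = latency_report(samples, block_time_ms=12_000)
print(report)
```

If even the tail of off-chain inference is a small fraction of a block interval, any design that waits for on-chain confirmation in the request path will be bounded by the chain, not by the model.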

⚖️ Tradeoffs

| Model | Pros | Cons |
|---|---|---|
| Centralized | Reliable | Trust needed |
| Fully DeAI | Ideologically pure | Unstable |
| Hybrid | Practical | Slightly complex |

Engineering Reality (Solidity)


You do not decentralize GPUs. You decentralize trust in results.
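One way to picture "decentralizing trust in results" is a quorum rule over independent attestations of the same output hash. The sketch below is a Python stand-in for that logic, not the article's Solidity; `accept_result`, the verifier names, and the quorum size are hypothetical.

```python
from collections import Counter
from typing import Optional


def accept_result(attestations: dict, quorum: int) -> Optional[str]:
    """Return the output hash backed by at least `quorum` verifiers, else None.

    attestations maps a verifier id to the output hash that verifier reports.
    """
    if not attestations:
        return None
    winner, votes = Counter(attestations.values()).most_common(1)[0]
    return winner if votes >= quorum else None


# Three hypothetical verifiers; one disagrees with the others.
reports = {"verifier-a": "0xaaaa", "verifier-b": "0xaaaa", "verifier-c": "0xbbbb"}
print(accept_result(reports, quorum=2))  # the hash two verifiers agree on
print(accept_result(reports, quorum=3))  # no hash reaches the quorum
```

The compute still runs on a handful of machines; what is spread across parties is the decision about whether to believe the result.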

Conclusion

Decentralized AI is not dead, but it will always be hybrid in production.

📚 Further Reading

- ZKML research papers

- Rollup architecture discussions

Part 3: Web3 Data -> Cloud ML Pipelines (Spark in Practice)

Why Blockchain Data Is Perfect for ML

Blockchains are:

- Append-only

- Time-ordered

- Public

- Behavior-rich

This makes them ideal for feature engineering because you can derive rates, burstiness, and counterparty diversity directly from the ledger.
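As one illustration of deriving a behavioral feature from ordered ledger data, the sketch below computes a burstiness coefficient from transaction timestamps. It assumes the common definition B = (sigma - mu) / (sigma + mu) over the gaps between consecutive transactions; the function name and sample timestamps are hypothetical.

```python
from statistics import mean, pstdev


def burstiness(timestamps):
    """Burstiness of inter-transaction gaps, in [-1, 1].

    -1 means perfectly regular spacing, values near 0 look random,
    and positive values indicate bursts separated by long silences.
    """
    ts = sorted(timestamps)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if len(gaps) < 2:
        return 0.0  # not enough activity to say anything
    mu, sigma = mean(gaps), pstdev(gaps)
    if mu + sigma == 0:
        return 0.0  # all transactions share one timestamp
    return (sigma - mu) / (sigma + mu)


print(burstiness([0, 10, 20, 30]))      # evenly spaced, bot-like cadence
print(burstiness([0, 1, 2, 3, 1000]))   # a burst followed by a long gap
```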

Reference Architecture

Blockchain Node -> S3 (raw JSON) -> Spark (ETL + features) -> ML model -> Predictions on-chain

Treat the chain as the source of truth and let the cloud absorb the heavy compute.

PySpark Example


Optional: commit scores on-chain (Python)


This keeps outputs auditable without pushing full inference on-chain.

ML Applications

- Wallet risk scoring

- Whale detection

- Bot identification

- Market behavior analysis

Why Cloud Wins

Only cloud platforms provide:

- Elastic compute

- Distributed storage

- Mature ML tooling

Closing

Web3 generates data. Cloud turns it into intelligence, and the chain preserves the audit trail.

📚 Further Reading

- MLflow Getting Started

- Apache Spark SQL Performance Tuning

- Apache Spark Tuning Guide

- Databricks Optimization Guide (Spark/Delta best practices)

Part 4: AI for Blockchain Fraud & Anomaly Detection

Fraud Is Behavioral

Most blockchain attacks do not break cryptography. They exploit human and system behavior. That means detection is about spotting deviations from normal activity, not finding a single magic signature.

Common Fraud Patterns

- Wash trading

- Sybil wallets

- Bot farms

- Flash-loan abuse

Feature Engineering Examples

| Feature | Signal |
|---|---|
| tx_rate | Automation |
| counterparty_entropy | Wallet diversity |
| value_variance | Manipulation |

These features are cheap to compute and hold up across chains.
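A minimal sketch of the three features in the table, computed for a single wallet in plain Python. The tuple layout, the observation window, and the sample transactions are assumptions made for illustration; at scale the same aggregations would run in a distributed engine.

```python
import math
from collections import Counter
from statistics import pvariance


def wallet_features(txs, window_seconds):
    """txs: list of (timestamp, counterparty, value) tuples for one wallet."""
    n = len(txs)
    if n == 0:
        return {"tx_rate": 0.0, "counterparty_entropy": 0.0, "value_variance": 0.0}
    counts = Counter(counterparty for _, counterparty, _ in txs)
    probs = [c / n for c in counts.values()]
    return {
        # Transactions per second over the observation window.
        "tx_rate": n / window_seconds,
        # Shannon entropy (bits) of the counterparty distribution.
        "counterparty_entropy": sum(-p * math.log2(p) for p in probs),
        # Population variance of transferred values.
        "value_variance": pvariance([value for _, _, value in txs]),
    }


# Hypothetical wallet: four transfers split evenly between two counterparties.
txs = [(0, "0xa", 1.0), (10, "0xb", 1.0), (20, "0xa", 3.0), (30, "0xb", 3.0)]
features = wallet_features(txs, window_seconds=40)
print(features)
```

Low entropy combined with a high rate is the kind of pairing that tends to suggest a bot cycling funds among a few addresses, though thresholds would have to come from your own data.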

Baseline anomaly detection (continuous scores)

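The original baseline code is missing from the text. The sketch below swaps in a deliberately simple technique, a robust z-score based on the median and the median absolute deviation (MAD), to show what a continuous anomaly score looks like. It is not Isolation Forest, and the sample `tx_rates` values are made up.

```python
from statistics import median


def robust_scores(values):
    """Continuous anomaly score per value: |x - median| / (1.4826 * MAD).

    1.4826 scales the MAD so it is comparable to a standard deviation
    when the data is roughly normal.
    """
    med = median(values)
    mad = median([abs(v - med) for v in values])
    scale = 1.4826 * mad
    if scale == 0:
        return [0.0 for _ in values]  # no spread, nothing stands out
    return [abs(v - med) / scale for v in values]


# Hypothetical per-wallet tx_rate values; the last wallet is far from the rest.
tx_rates = [0.9, 1.1, 1.0, 1.2, 0.8, 1.0, 9.5]
scores = robust_scores(tx_rates)

# Rank wallets from most to least anomalous before choosing any cutoff.
ranked = sorted(zip(scores, tx_rates), reverse=True)
print(ranked[0])
```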

Use the continuous scores to rank alerts before applying thresholds.

Blockchain Integration

- Store scores on-chain

- Trigger smart-contract rules

- Maintain immutable audit trail

On-chain writes should be sparse: store decisions or summaries, not every feature.

Conclusion

AI detects. Blockchain enforces.

📚 Further Reading

- Isolation Forest (Liu, Ting, Zhou, 2008), PDF

- Rekt News (exploit writeups)

- De.Fi REKT Database (exploit index)

- Ronin bridge security breach postmortem

- Poly Network hack analysis (Elliptic)

Part 5: Smart Contracts + AI Agents: Autonomous Systems

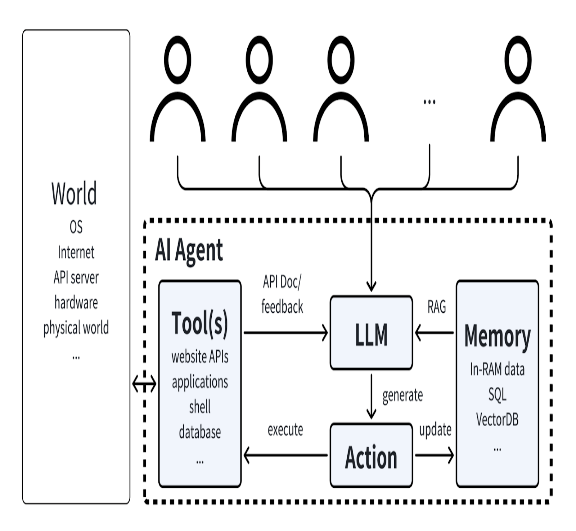

Smart contracts are deterministic enforcement engines. AI agents are adaptive decision engines. Combine them safely by treating agent output as untrusted input and enforcing guardrails on-chain. The chain should enforce invariants, not run the model.

🧩 Case Study: Autonomous Rebalancing With Hard Caps

An AI agent proposes rebalances off-chain. On-chain contracts enforce max exposure, max daily turnover, and an emergency pause. The guardrails keep failure modes bounded even when the model is wrong.

Architecture patterns

Proposal → Validate → Execute

- Agent proposes an action (signed payload)

- Contract validates caps/allowlists/thresholds

- Contract executes and emits audit events

Oracle / attestation pattern

Contracts accept signed risk attestations from authorized signers and enforce freshness windows + nonces. This keeps decisions off-chain while preserving accountability.
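The checks a contract would apply to an attestation can be sketched in Python. Assumptions are labeled loudly: HMAC with a shared secret stands in for the public-key signatures a real contract would verify, and `AttestationVerifier`, the field names, and the 300-second window are hypothetical.

```python
import hashlib
import hmac
import json


def sign(key: bytes, attestation: dict) -> str:
    """HMAC-SHA256 over canonical JSON (a stand-in for an on-chain signature)."""
    msg = json.dumps(attestation, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, msg, hashlib.sha256).hexdigest()


class AttestationVerifier:
    def __init__(self, signer_keys: dict, max_age_seconds: int):
        self.signer_keys = signer_keys          # authorized signer id -> key
        self.max_age_seconds = max_age_seconds  # freshness window
        self.used_nonces = set()                # (signer, nonce) pairs seen

    def verify(self, attestation: dict, signature: str, now: int) -> bool:
        key = self.signer_keys.get(attestation["signer"])
        if key is None:
            return False  # signer is not on the allowlist
        if not hmac.compare_digest(sign(key, attestation), signature):
            return False  # payload was altered or signed with another key
        age = now - attestation["issued_at"]
        if age < 0 or age > self.max_age_seconds:
            return False  # outside the freshness window
        nonce = (attestation["signer"], attestation["nonce"])
        if nonce in self.used_nonces:
            return False  # replayed attestation
        self.used_nonces.add(nonce)
        return True


key = b"demo-secret"
verifier = AttestationVerifier({"oracle-1": key}, max_age_seconds=300)
att = {"signer": "oracle-1", "wallet": "0xabc", "risk_score": 17,
       "issued_at": 1000, "nonce": 1}
print(verifier.verify(att, sign(key, att), now=1100))  # fresh, first use
print(verifier.verify(att, sign(key, att), now=1100))  # same nonce again
```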

Guardrails (Solidity)

Exposure caps + risk threshold (baseline)

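The Solidity for this guardrail is missing from the text, so here is a Python model of the same checks. The names `Guardrails` and `validate_trade`, the 0 to 100 risk scale, and the sample limits are assumptions; integers are used throughout because contract arithmetic has no floats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    max_exposure: int    # largest total position allowed, in base units
    max_risk_score: int  # trades with an attested risk above this are rejected


def validate_trade(current_exposure: int, trade_amount: int,
                   risk_score: int, rails: Guardrails) -> int:
    """Return the new exposure, or raise if any guardrail is violated."""
    if trade_amount <= 0:
        raise ValueError("amount must be positive")
    if risk_score > rails.max_risk_score:
        raise ValueError("risk above threshold")
    new_exposure = current_exposure + trade_amount
    if new_exposure > rails.max_exposure:
        raise ValueError("exposure cap exceeded")
    return new_exposure


rails = Guardrails(max_exposure=1_000, max_risk_score=70)
print(validate_trade(400, 500, risk_score=35, rails=rails))  # within both limits
```

The agent never sees or sets `rails`; in the pattern described above, those parameters belong to governance.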

Rate limiting (prevent fast mistakes)

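A rate limit of this kind can be modeled as a fixed-window counter. The sketch is in Python rather than Solidity, and `DailyTurnoverLimiter`, the 24-hour window, and the limit of 1,000 units are hypothetical; `now` plays the role of the block timestamp.

```python
class DailyTurnoverLimiter:
    """Fixed-window limiter: at most `max_daily_turnover` units per 24 hours."""

    DAY = 86_400  # seconds

    def __init__(self, max_daily_turnover: int):
        self.max_daily_turnover = max_daily_turnover
        self.window_start = 0
        self.used = 0

    def consume(self, amount: int, now: int) -> None:
        # Start a fresh window once the current one has fully elapsed.
        if now - self.window_start >= self.DAY:
            self.window_start = now
            self.used = 0
        if self.used + amount > self.max_daily_turnover:
            raise ValueError("daily turnover limit exceeded")
        self.used += amount


limiter = DailyTurnoverLimiter(max_daily_turnover=1_000)
limiter.consume(600, now=100_000)
print(limiter.used)  # 600 of 1,000 used in the current window
```

A fixed window is the simplest form and allows up to twice the limit across a window boundary; a sliding window would be stricter at the cost of more state.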

Circuit breaker / emergency pause

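The pause logic is small enough to model directly. This Python sketch assumes a single guardian address (for example a multisig) and uses hypothetical names throughout; the `events` list stands in for emitted contract events.

```python
class PausableExecutor:
    """Model of a contract whose execution path can be halted by a guardian."""

    def __init__(self, guardian: str):
        self.guardian = guardian
        self.paused = False
        self.events = []  # stand-in for on-chain audit events

    def set_paused(self, caller: str, paused: bool) -> None:
        if caller != self.guardian:
            raise PermissionError("only the guardian can pause or unpause")
        self.paused = paused
        self.events.append(("Paused" if paused else "Unpaused", caller))

    def execute(self, action: str) -> None:
        if self.paused:
            raise RuntimeError("execution is paused")
        self.events.append(("Executed", action))


executor = PausableExecutor(guardian="guardian-multisig")
executor.execute("rebalance-1")
executor.set_paused("guardian-multisig", True)
print(executor.paused)  # further execute() calls now fail until unpaused
```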

Failure modes and mitigations

| Failure mode | Mitigation |
|---|---|
| Malicious/incorrect agent output | caps, allowlists, staged rollout |
| Oracle compromise | multiple oracles, medianization, bounds checks |
| Front-running / MEV | TWAP, slippage caps, commit-reveal |
| Reorgs / finality | confirm-finality thresholds, idempotent ops |
| Replay attacks | nonce + expiry + domain separation |

Governance patterns

- Multisig guardian for emergency pause and parameter updates

- DAO voting for policy-level changes (caps, allowlists, signer sets)

- Timelocks for upgrades

Governance is the safety net that turns an agent into a controlled system.

✅ Implementation Checklist

- Separate proposal (off-chain) from execution (on-chain)

- Validate caps/bounds/allowlists on-chain

- Add nonce + expiry to signed payloads

- Use rate limits, timelocks, and circuit breakers

- Emit audit events for every execution

⚖️ Tradeoffs

| Design | Pros | Cons |
|---|---|---|
| Fully autonomous | fast | risky without strong guardrails |
| Human approvals | safer | slower |
| Hybrid (recommended) | practical | more moving parts |

📚 Further Reading

- Smart contract security patterns (pause, timelock, allowlists)

- MEV/front-running mitigation writeups

- Oracle/attestation design patterns

Takeaway

Let AI propose, let contracts enforce, and let governance control parameters. The executeTrade snippet is only one guardrail pattern; production systems need caps, rate limits, attestations, and audit trails.

Part 6: Auditable AI: Using Blockchain for Trust & Governance

The Trust Problem

AI systems increasingly affect:

- Finance

- Credit

- Governance

- Compliance

But they are often opaque, which makes audits and incident response painfully slow.

Blockchain as an Audit Log

Store:

- Model hash

- Input hash

- Output hash

- Timestamp

- Signer

These fields create a tamper-evident chain of custody for model decisions.

Example Record

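The example record itself is missing from the text. As a sketch, the five fields listed above could be assembled and later re-checked like this; the function names, the signer label, and the sample bytes are hypothetical, and producing an actual signature is out of scope here.

```python
import hashlib
import time


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_audit_record(model_bytes: bytes, input_bytes: bytes,
                       output_bytes: bytes, signer: str, timestamp=None) -> dict:
    """Assemble the audit fields: hashes only, never the raw artifacts."""
    return {
        "model_hash": sha256_hex(model_bytes),
        "input_hash": sha256_hex(input_bytes),
        "output_hash": sha256_hex(output_bytes),
        "timestamp": int(time.time()) if timestamp is None else timestamp,
        "signer": signer,
    }


def matches(record: dict, model_bytes: bytes, input_bytes: bytes,
            output_bytes: bytes) -> bool:
    """Audit-time check: do the retained artifacts reproduce the stored hashes?"""
    return (record["model_hash"] == sha256_hex(model_bytes)
            and record["input_hash"] == sha256_hex(input_bytes)
            and record["output_hash"] == sha256_hex(output_bytes))


# Hypothetical artifacts kept off-chain by the model owner.
model, inputs, output = b"model-weights-v1", b'{"wallet":"0xabc"}', b'{"score":0.87}'
record = build_audit_record(model, inputs, output, signer="ml-platform-key-1",
                            timestamp=1_700_000_000)
print(matches(record, model, inputs, output))
```

During an audit, the owner reveals the stored artifacts and anyone can recompute the hashes; a mismatch on `model_hash` shows the decision did not come from the model that was claimed.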

Why This Matters

- Regulatory audits

- Post-incident analysis

- Model accountability

- Explainability

Final Takeaway

Blockchain does not make AI smarter. It makes AI answerable and reproducible.

Series Summary

| Technology | Role |
|---|---|
| AI | Intelligence |
| Blockchain | Trust |
| Cloud | Scale |

The future is not decentralized vs centralized. It is a world of architecturally honest hybrid systems.